Not ok, Computer.

About which I know nothing; so I write to figure it out.

At this moment my handbag contains: my wallet, my passport, a power adapter for my computer, a power adapter for my phone, a portable charger I never remember to charge, three types of painkillers (the Russian Nurofen, Ibuprofen, and CVS brand menstrual pills), two sanitary pads should the need for their use suddenly befall me, a small bottle of perfume, two lipsticks, two lipliners, two caps from lipliners no longer in this bag, six lipglosses, a metal and an acetate French pin which work well for holding my hair up only when I wear it curly, single-use pouches of shampoo and conditioner, a bottle of nail polish, a nail file and a cuticle pusher, a cardboard packet of brand new bobby pins, six loose bobby pins (tarnished and bent), a set of housekeys, keycards from the hotel in Miami and from the hotel in LA, a bottle of Dry Hands, a thumb-sized pink ceramic cat that my niece brought me from a town on the banks of the Volga called Myshkin, a fluorescent pink pen, notes I took during a tarot reading at the end of January because I had found myself at my wits’ end, some loose coins, a tangled necklace. Can AI do this? Methinks not.

It is against my own best judgment that I am attempting to articulate anything about “AI,” but I wanted to revisit something I expressed, off-handedly, a few weeks ago in recommending this piece on Anthropic in the New Yorker, which is that I do not care about or feel particularly threatened by LLMs.

The news of the past few weeks, accompanied by a barrage of simultaneous writing on AI and its effects on reality outside of the computer, has prompted me to reconsider my dismissive attitude. It’s not that I wholly disagree with the sentiment I expressed previously, but it is proving to be true that a refusal to engage with the matter is, in fact, somewhat irresponsible, if not in broad, global terms (I prefer to keep my delusions of grandeur at arm’s length), then on the scale of one’s individual life. And in any case, it is proving impossible not to think about. The news and writing in question: Anthropic’s standoff with the DoD and OpenAI’s quick swoop into the empty space left by its competitor; the Sam Kriss piece in Harper’s about the cheating software Cluely and the “agentic” young men in San Francisco; the aforementioned piece by Gideon Lewis-Kraus; this piece about romance “novelists” producing their churnings-out with the aid of Claude; Becca Rothfeld’s resonant screed against LLMs; Catherine Lacey’s also impassioned treatise against their smoothing of reality; Sophie Haigney’s recent piece about AI animal videos; Anna Wiener’s report on AI companions; Elvia Wilk’s very good argument on why the handwringing over the quality and nature of LLM writing is beside the point. Every day, really, there’s someone pointing out the ways that this still new technology is distorting our perceptions or our ability to think, or how it will decimate employment as we know it, how it’s changing the way wars are fought, amplifying the human capacity for violence to a numb, hollow echo, how we don’t yet fully know what AI itself is capable of.

My kneejerk response to the whole ordeal, when I give it more thought, is still to disengage completely, run away to the remote countryside, begin homesteading and all that jazz. But it is quite clear at this point that AI is rather irreversibly part of our lives now: quite soon there will be adults who don’t remember a life before ChatGPT, and in as little as fifteen years there will be those who have never known the world without it. And if you, like me, have a day job/office job/email job/whatever-you-want-to-call-it, it’s likely that most software products you use to do said job integrate AI in some capacity, however clumsy it may be. So while my instinctual reaction no longer feels entirely appropriate, neither does the moral panic that proposes refusal and abstinence as the ultimate solution to the AI question (see: Becca Rothfeld). Again, I don’t entirely disagree with its proponents; but if the hegemony of tech has taught us anything, it is that one cannot reasonably, let alone plausibly, expect the median user/consumer to take a moral stance against something that inarguably makes their life easier, however it is that it does so.

To be clear, there is plenty to panic and to moralize about: by now, as wars everywhere flare, the perilousness and monstrosity of AI’s use in them should be clear and inspire profound fear; with this stuff I always think about how technological advances cloaked in the notion of “progress” are just a way to make horrors heretofore unthinkable an efficient reality, to paraphrase (if not mangle) Adorno. This should be enough, the chief reason, really, to abstain from the use of AI; but it would also be naïve to think that one’s individual boycott would save civilian lives in a war. At most, it is a refusal to become a source of extraction of whatever “intelligence” it may adopt, while also saving a little bit of water, which, personally, I’ll take, but I will not harbor any illusions about it having any impact in the grand scheme of things: in posting this on the internet, I negate these efforts anyway (or such is my understanding).

As many have pointed out by now, the corner of this where users have the most constructive agency is that of labor, and organizing indeed seems to be the answer: no matter if one is of the creative persuasion, professionally speaking, or holds a pink-collar day job, or works in tech, whatever that might mean anymore, AI’s threats to one’s livelihood are equally existential, while abstinence, especially in junior and mid-level positions, puts workers at a disadvantage, with expectations of productivity (or, at the very least, the perception of it) having ramped up largely with the advent of AI. In any case, I’m not suggesting anything novel, and I don’t know if it even really needs to be said in this forum anymore.

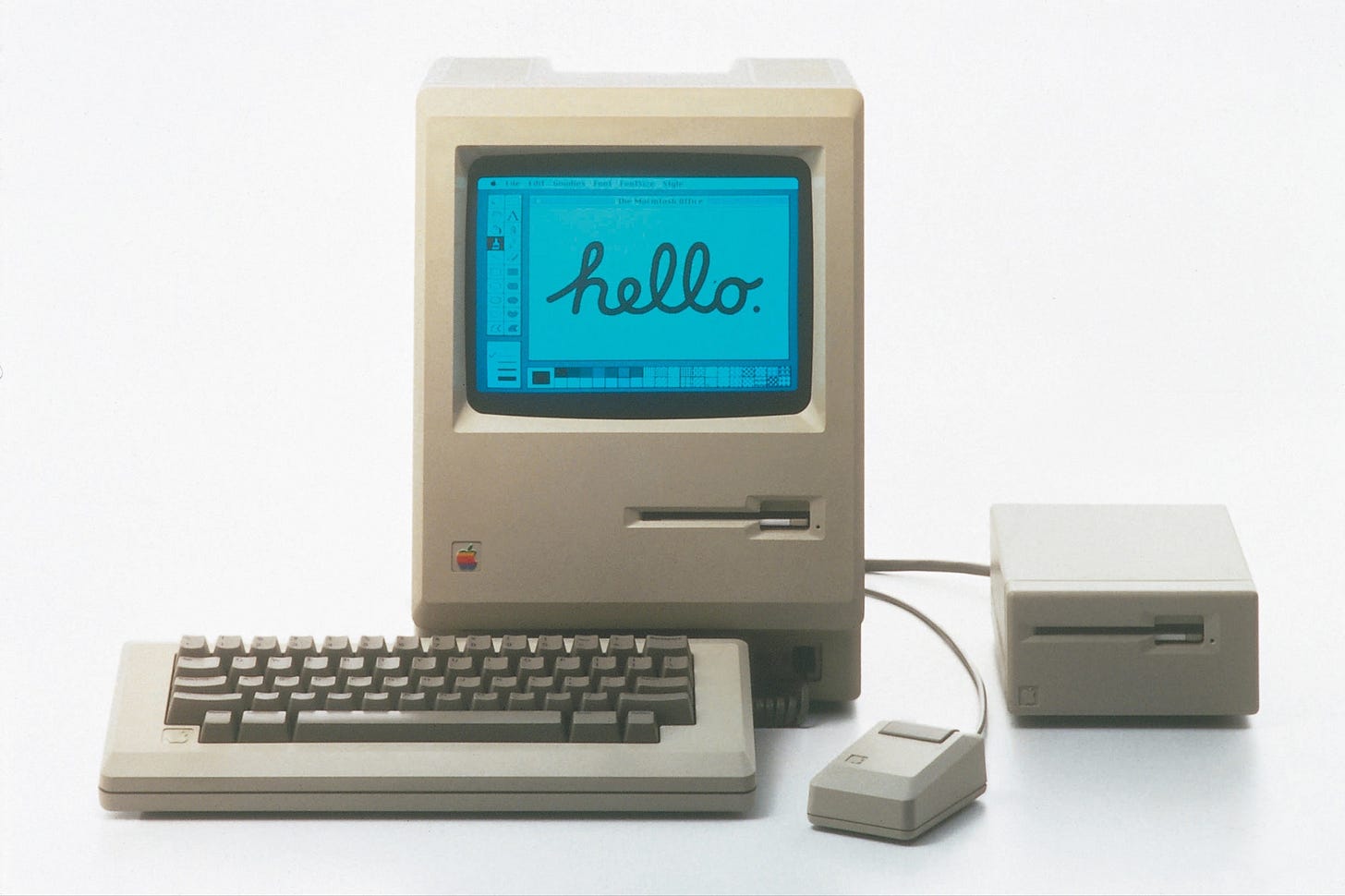

What was the compulsion to start this little ditty with an inventory of the contents of my handbag? Well, it’s quite simple: for as much as this has occupied my thoughts, there is one thing to which I kept returning, which is that no matter how well they learn to imitate human activity as mediated by a screen, LLMs can never approximate, much less know, what it is to be human out in the wide world outside the web, where our intelligence (should we be so lucky to have any) is accompanied by the animal reality of having a body. While they can, rather competently, extract from the vast bodies of text that describe this reality, they do not know the sudden rush of pleasure that we identify as fun, or the acute discomfort of social anxiety as manifested in sweaty palms and disorderly, truncated thoughts. The contents of my bag are the material traces of the life I live outside of the computer, and while superficially they may categorize me as a certain kind of person in ways that AI, too, might be able to recognize, the narrative undercurrents of my biography that they suggest can really only fully be articulated by me, if I choose to do so. I think this charming list by Allegra Samsen just about summarizes it in its first bullet point: AI cannot kiss with tongue (or at all).

This comes back to the question of whether AI “can write good,” as put by Wilk’s aforementioned essay, which is, ultimately, a pointless one to pose. AI, possibly sooner rather than later, will write fine, but the thing is that it will only ever write when prompted. We, the human writers, can’t really help it; even as career prospects in this racket continue their dwindling, we’ll keep doing this because it carries some existential necessity for us. I’m willing to wager that the majority of the world’s writers do not depend on their writing to make a living; whether that is unfortunate or not is not for me to say, since I am, and will likely be for a long time, a publicist for most of my days’ waking hours. And still I, like many others in my position, feel a compulsion to keep doing this. Sometimes the purpose is to understand what it is that I think about something; in the case of AI, I have found, it is that I think it is profoundly boring. I vow to never write about it again.

As for the swamp of textual “slop” we are now confronted with: well, I’ll say that there have always been bad writers, and it cannot be taken for granted that anything that someone deemed worth publishing will be good. Most of it never was.

The pink ceramic cat from Myshkin. The two caps from lipliners no longer in the bag. This is the piece I didn't know I needed to read today. Because I've been sitting with something almost opposite to your conclusion: not that absorbed language can't carry something true about being human, but that sometimes it can, that a voice can catch something from the outside world and come out the other side more itself rather than less. But I think we might be talking about the same thing from different ends. You're defending the ceramic cat. I'm just saying sometimes the ceramic cat gets in anyway, through the words ♥